HPE GreenLake Virtual Assistant

Architecting an intelligent enterprise assistant

Overview

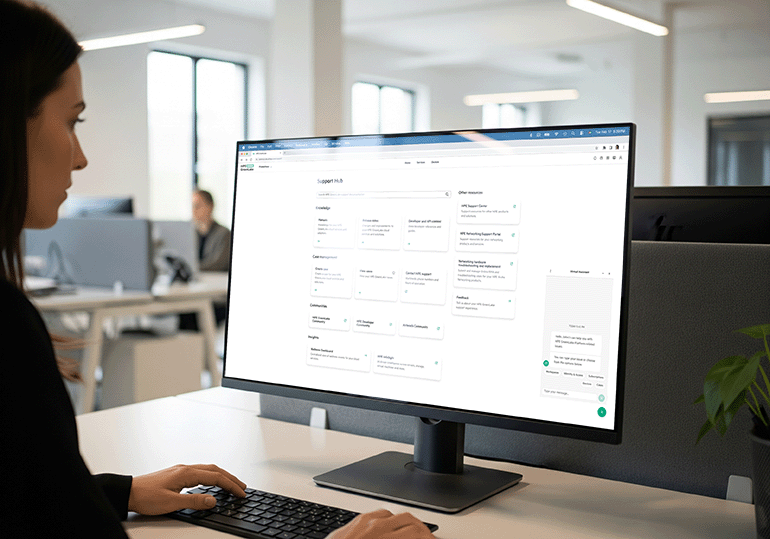

As the Lead Product Designer for the native HPE GreenLake Virtual Assistant, I architected a conversational UI from the ground up to shift enterprise support from static documentation to dynamic, context-aware resolution.

Navigating severe technical limitations and complex state-management requirements, I designed a deeply integrated AI experience that actively reduces cognitive load and accelerates time-to-resolution. While the standalone VA was ultimately de-scoped, this foundational work heavily informed the organization's understanding of conversational UX.

✶ Bypassed hardcoded Amazon Lex framework constraints to deliver a scalable UI

✶ Integrated Okta backend data to eliminate manual user data entry

✶ Architected complex state-persistence matrices across a sprawling enterprise ecosystem

Role

Team

Scope

The tier-1 bottleneck

Human support agents were overwhelmed with repetitive queries—password resets, basic configuration checks, and warranty status lookups. We needed to conceptualize a digital frontline that could intercept and solve simple issues while intelligently escalating complex ones, shifting the burden from human agents to scalable systems.

Defining the role of AI in enterprise support

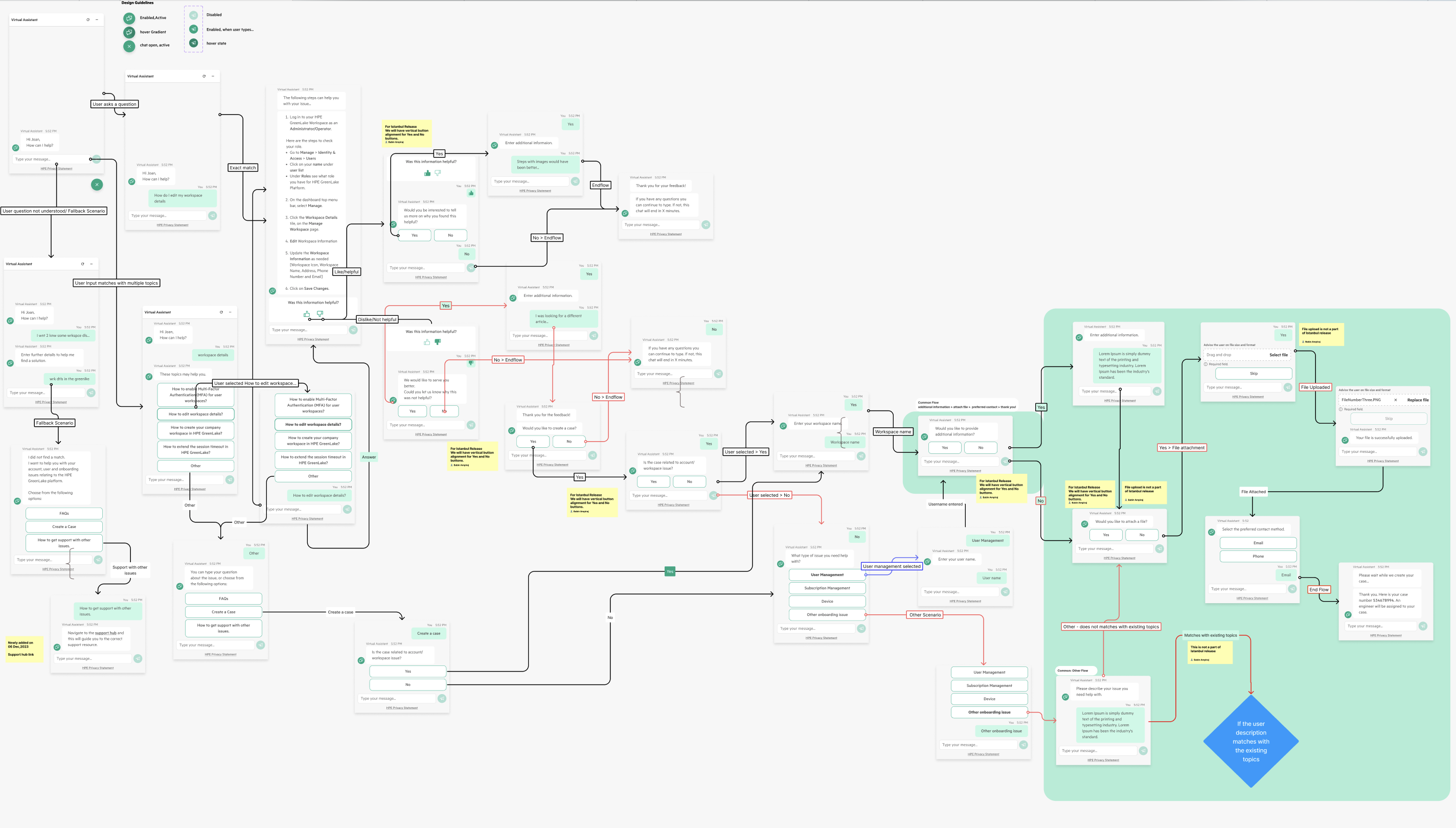

The strategic value of an enterprise Virtual Assistant extends far beyond a simple chat interface; it acts as a primary deflection engine designed to reduce high-cost call center volume. My process began by auditing legacy support data to identify the most frequent, high-friction user journeys. I mapped out comprehensive conversation flows that transformed static troubleshooting into an interactive diagnostic dialogue. By anchoring the design process in business ROI and user telemetry, I ensured the VA wasn't just a novelty feature, but a core strategic asset that guided users to faster resolutions while driving down operational costs.

Mapping out the happy paths and error paths for the top 10 most common user intents.

Establishing the VA's tone of voice—helpful, technical, concise, but strictly non-human.

Translating static docs into interactive dialogue

Displaying dense, technical enterprise documentation inside a narrow 400px chat interface easily leads to cognitive overload. Instead of simply porting over static technical manuals, I fundamentally redesigned the information architecture for a conversational medium. The goal was to shift the user from passive reading to active, guided resolution by structuring complex support matrices into intuitive decision trees.

Conversational wayfinding

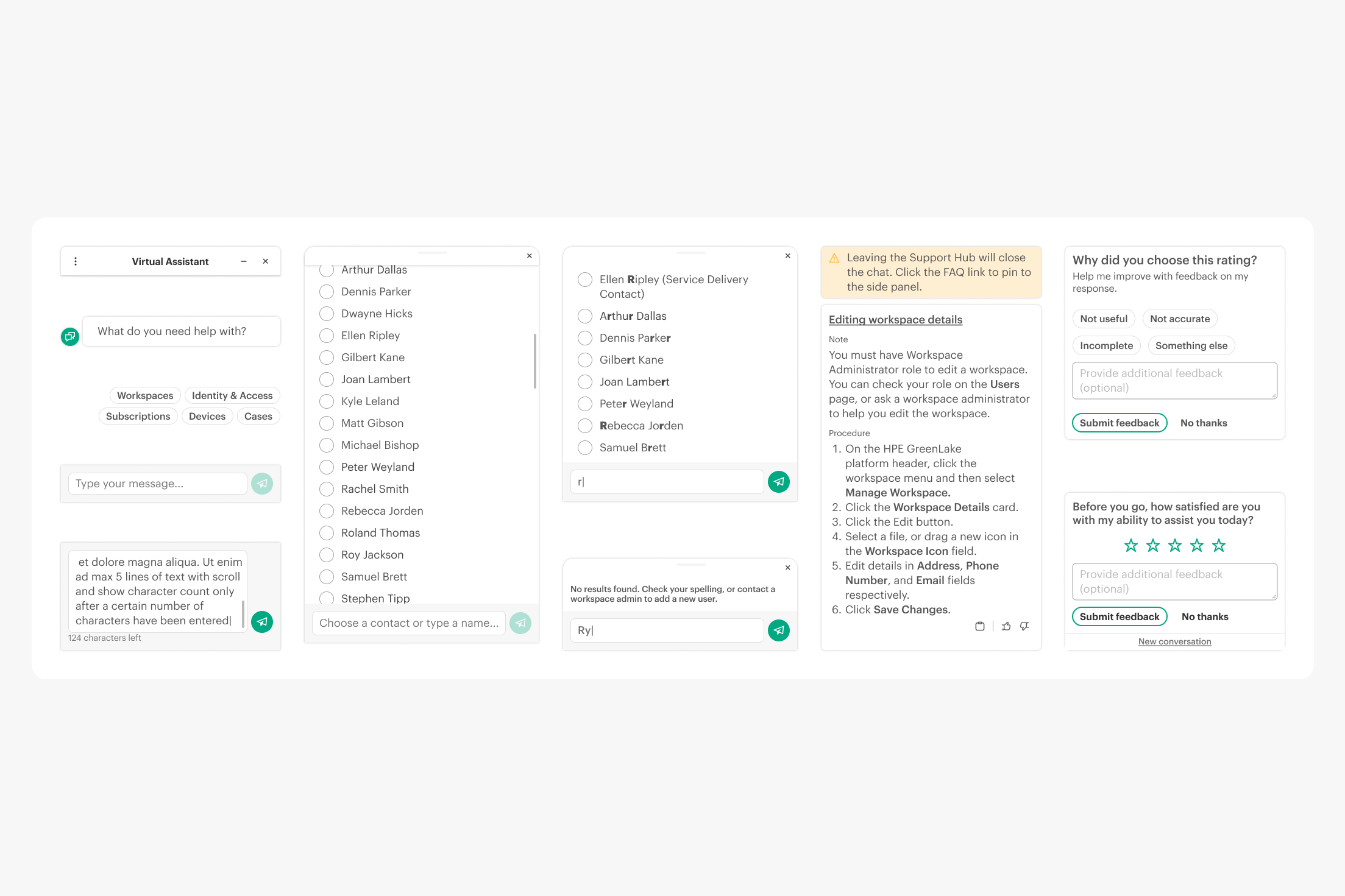

To prevent users from hitting dead ends or needing to type perfect NLP queries, I engineered a robust system of contextual prompt chips. This established a clear scent of information, allowing users to navigate through highly complex, multi-tiered enterprise taxonomies with simple, guided clicks.
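As a rough illustration of the chip-driven wayfinding described above, the decision tree can be modeled as nodes whose chips point at the next node. This is only a minimal sketch: the node ids, messages, chip labels, and the `selectChip` helper are all hypothetical and were not part of the shipped design.

```typescript
// Hypothetical model of a chip-driven decision tree (names are illustrative).
interface Chip {
  label: string; // text shown on the prompt chip
  next: string;  // id of the node the chip leads to
}

interface DialogNode {
  id: string;
  message: string;
  chips: Chip[];
}

const nodes: DialogNode[] = [
  {
    id: "root",
    message: "What do you need help with?",
    chips: [
      { label: "Account access", next: "account" },
      { label: "Warranty status", next: "warranty" },
    ],
  },
  {
    id: "account",
    message: "Which account issue are you seeing?",
    chips: [
      { label: "Reset password", next: "reset" },
      { label: "Back", next: "root" },
    ],
  },
  {
    id: "warranty",
    message: "Confirm the device you want to check.",
    chips: [{ label: "Back", next: "root" }],
  },
  {
    id: "reset",
    message: "Here are the steps to reset your password.",
    chips: [{ label: "Start over", next: "root" }],
  },
];

const tree = new Map<string, DialogNode>(
  nodes.map((n): [string, DialogNode] => [n.id, n]),
);

// Follow a chip by label. An unknown label keeps the user on the current
// node, so a click can never lead to a dead end.
function selectChip(
  tree: Map<string, DialogNode>,
  currentId: string,
  label: string,
): DialogNode {
  const current = tree.get(currentId);
  if (!current) throw new Error(`Unknown node: ${currentId}`);
  const chip = current.chips.find((c) => c.label === label);
  const next = chip ? tree.get(chip.next) : undefined;
  return next ?? current;
}
```

Every node in this sketch carries at least one chip, which is the property that keeps a guided click from ever stranding the user.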

Action-oriented toolcards

I shifted the UX from a reading experience to a doing experience. By embedding utility actions—such as one-click copy buttons, deep-links to specific platform pages, and inline feedback tools—directly into the chat responses, the Virtual Assistant actively facilitates the resolution rather than just suggesting it.

Building the conversational framework

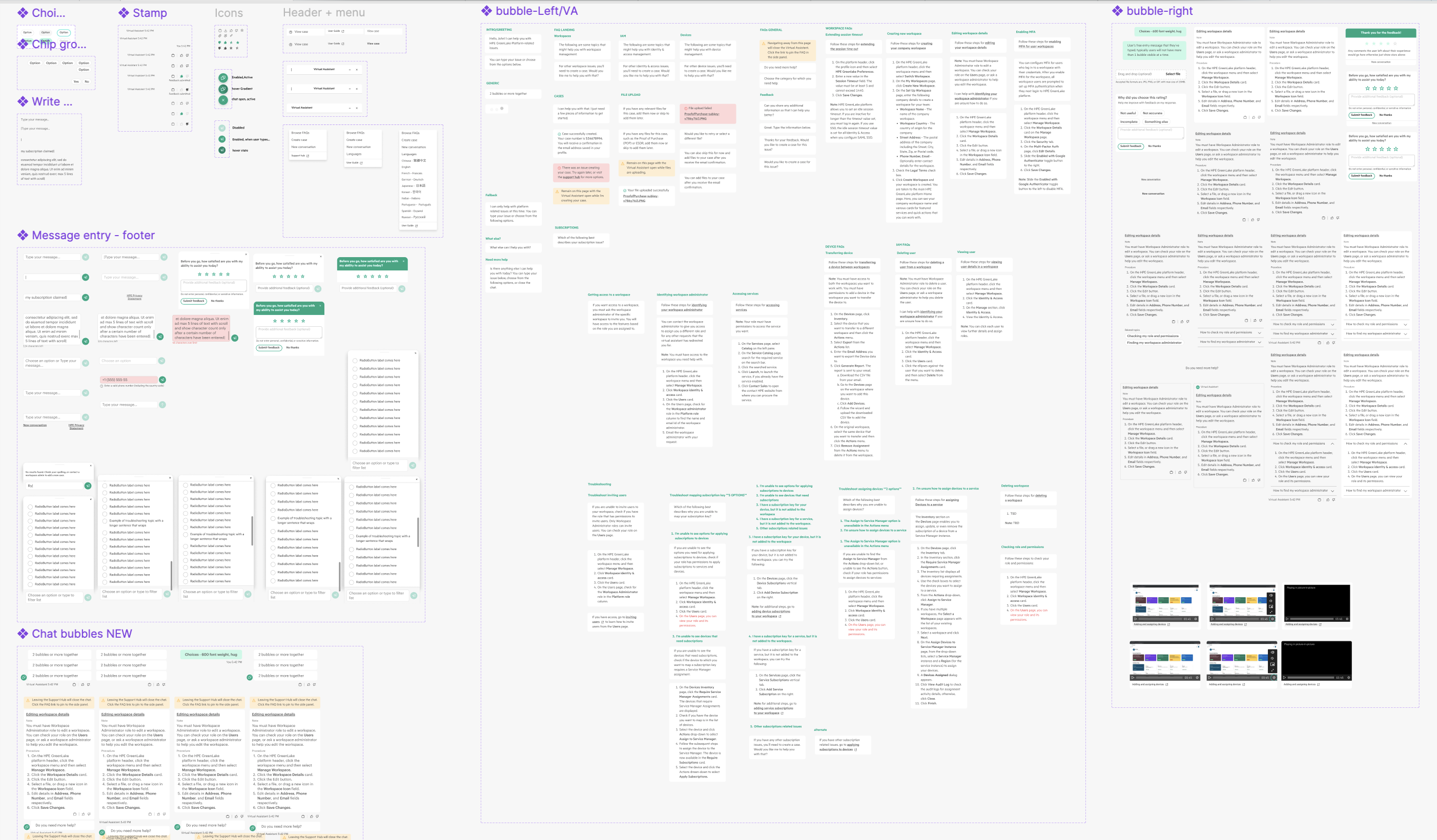

Because a conversational AI had never been natively integrated into the GreenLake platform, the global design system lacked the required UI componentry. I could not rely on existing libraries; I had to invent the framework. I designed a net-new suite of conversational components from scratch—including chat bubbles, selector chips, scrolling lists with auto-suggest and type-to-filter mechanisms, and dynamic inputs—establishing the foundational chat architecture for the entire enterprise.

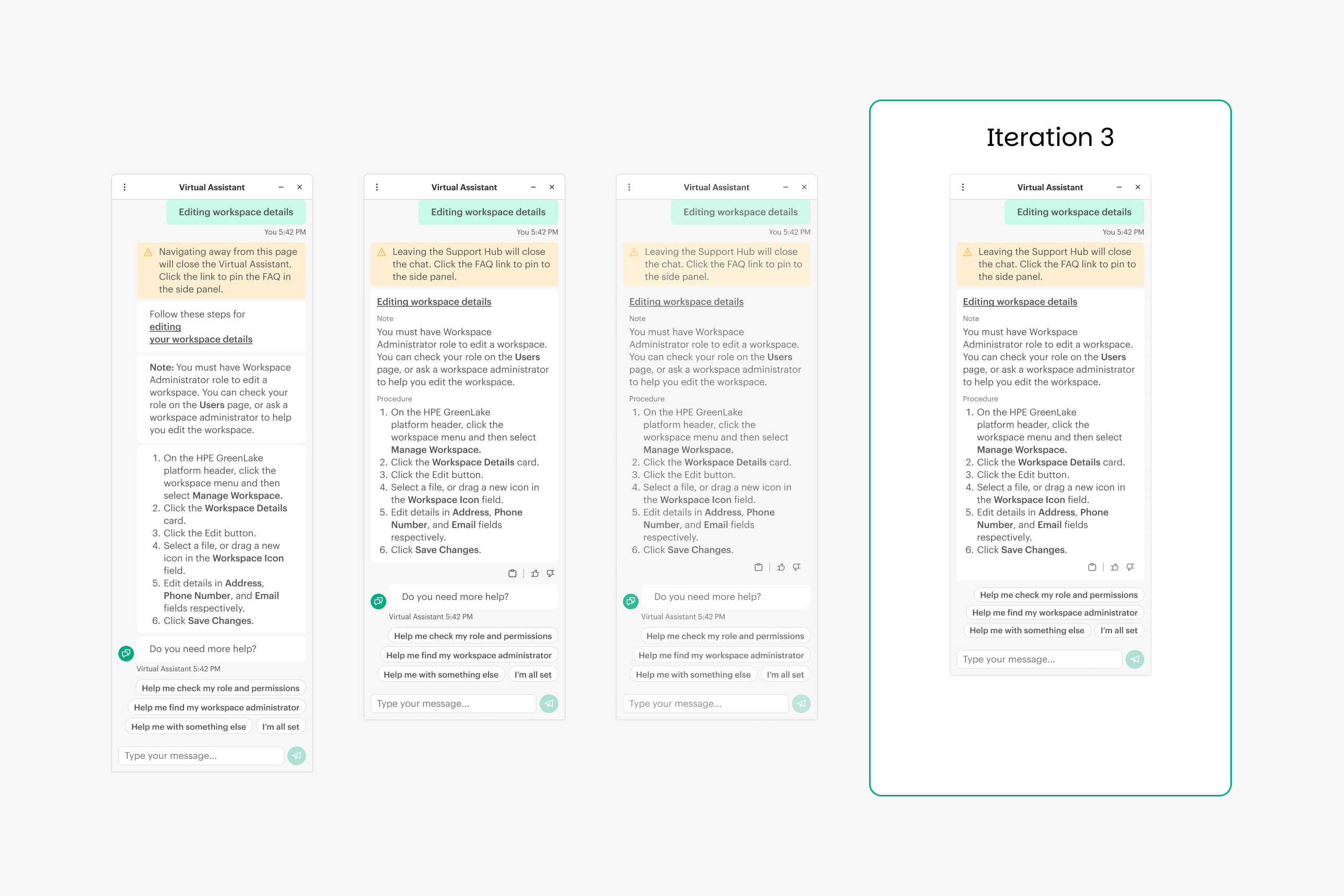

Iterative refinement & ruthless reduction

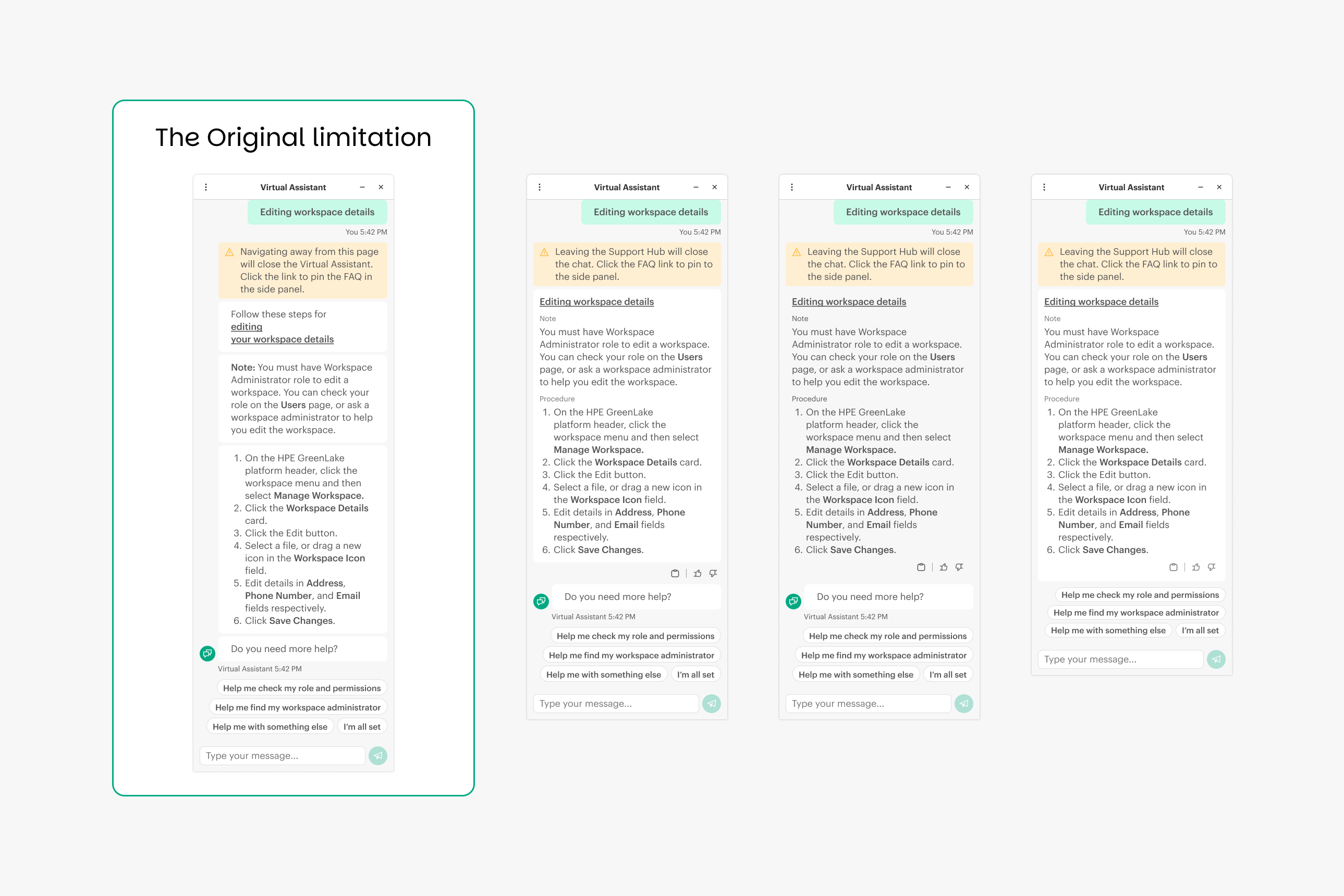

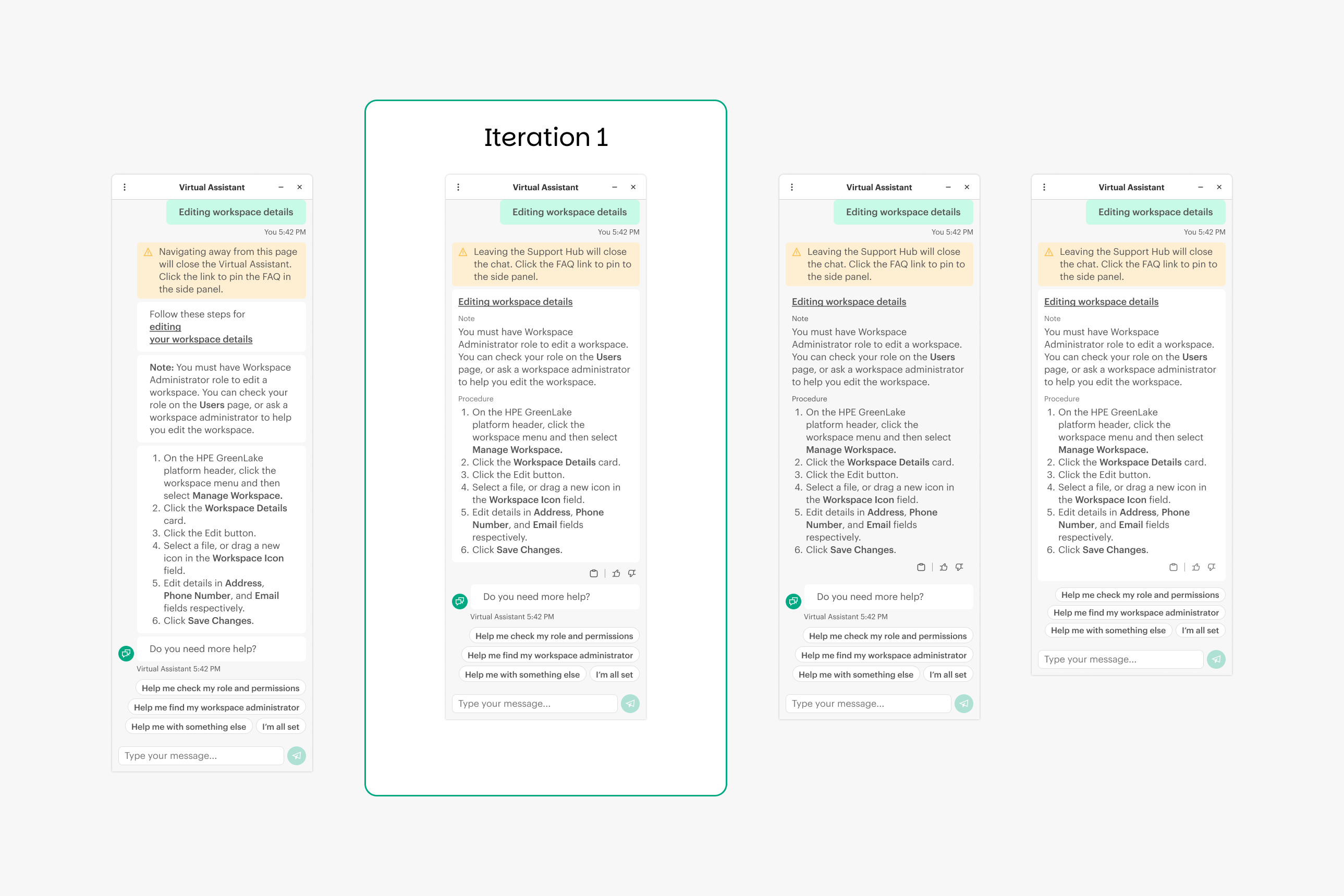

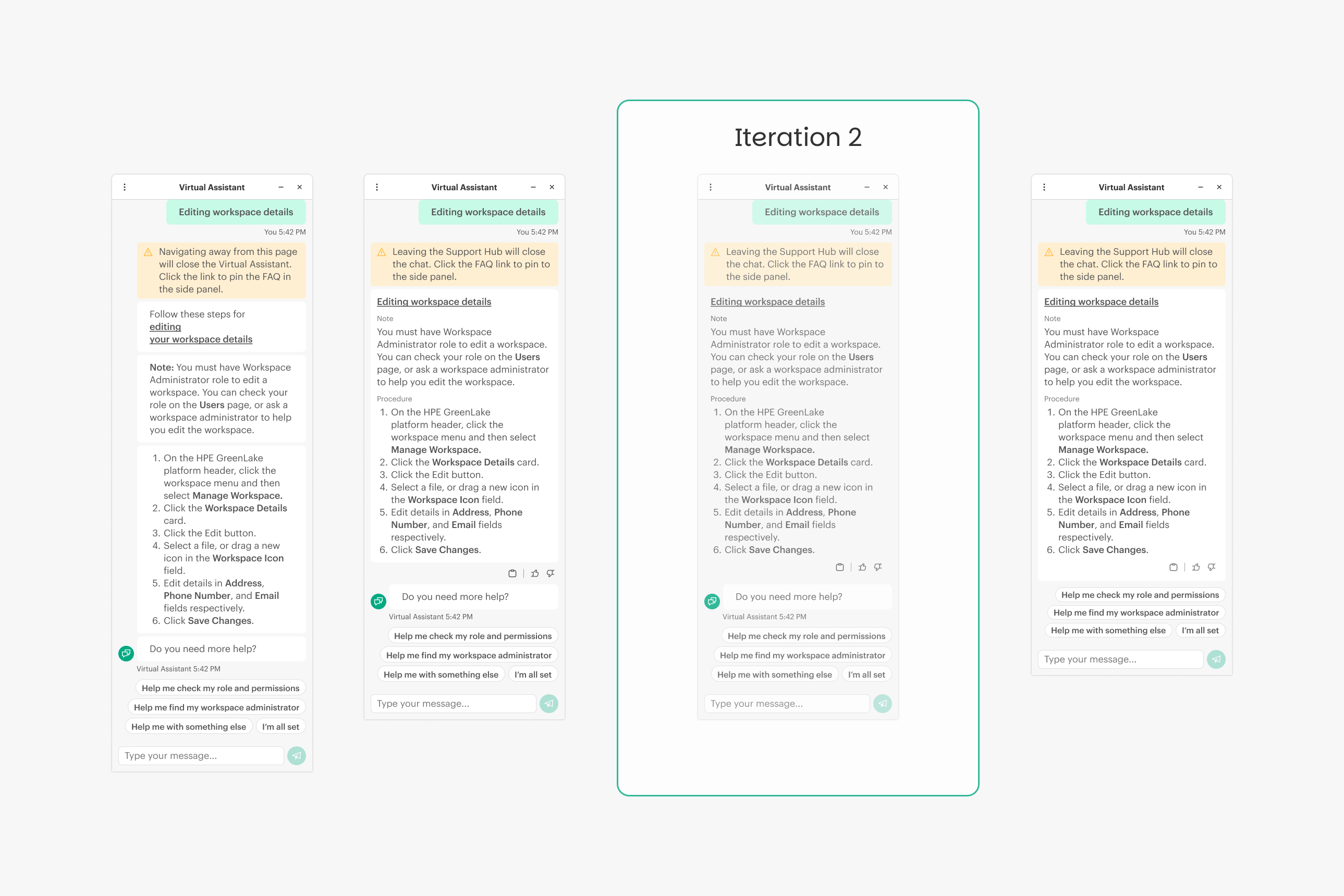

Creating custom components is only half the battle; they must be stress-tested against the spatial realities of a 400px micro-UI. Early testing revealed that our initial FAQ rendering suffered from severe vertical bloat, fragmenting single answers across multiple UI bubbles and forcing users to scroll past the fold to find their next steps. I led a rapid, multi-iteration redesign of these custom components. By ruthlessly consolidating information, converting static text blocks into interactive toolcards, and eliminating redundant conversational filler, I engineered a highly dense, scalable UI component that pulled critical action items back to the forefront.

The initial logic split a single FAQ into five discrete UI elements—a warning banner, a title, a note, a procedure list, and a conversational follow-up ("Do you need more help?"). The excessive margins between these separate bubbles wasted critical vertical space and buried the user's next steps.

I merged the fragmented text blocks into a single, cohesive "Documentation Card." This immediately reduced visual clutter. I also introduced utility icons (Copy, Thumbs Up/Down) directly into the card footer, converting the static text into an interactive tool.

I refined the internal padding and standardized the warning banner to sit flush within the Documentation Card. This strengthened the visual hierarchy, ensuring the user immediately registered the alert before reading the procedural steps.

I updated the global component to align with the new HPE brand standards, introducing the dark green primary header. More importantly, I eliminated the redundant "Do you need more help?" bot bubble entirely. By trusting the user to understand the prompt chips natively, we saved massive vertical space, pulling the action items above the fold and creating a significantly tighter, faster UX.

Architecting around framework limitations

A successful enterprise AI cannot just be an idealized prototype; it must survive the collision with rigid backend infrastructure. Rather than compromising the user experience when faced with Amazon Lex framework constraints and complex data routing, I designed custom UI components and motion logic to work around those technical limitations and maintain a frictionless, deeply integrated user journey.

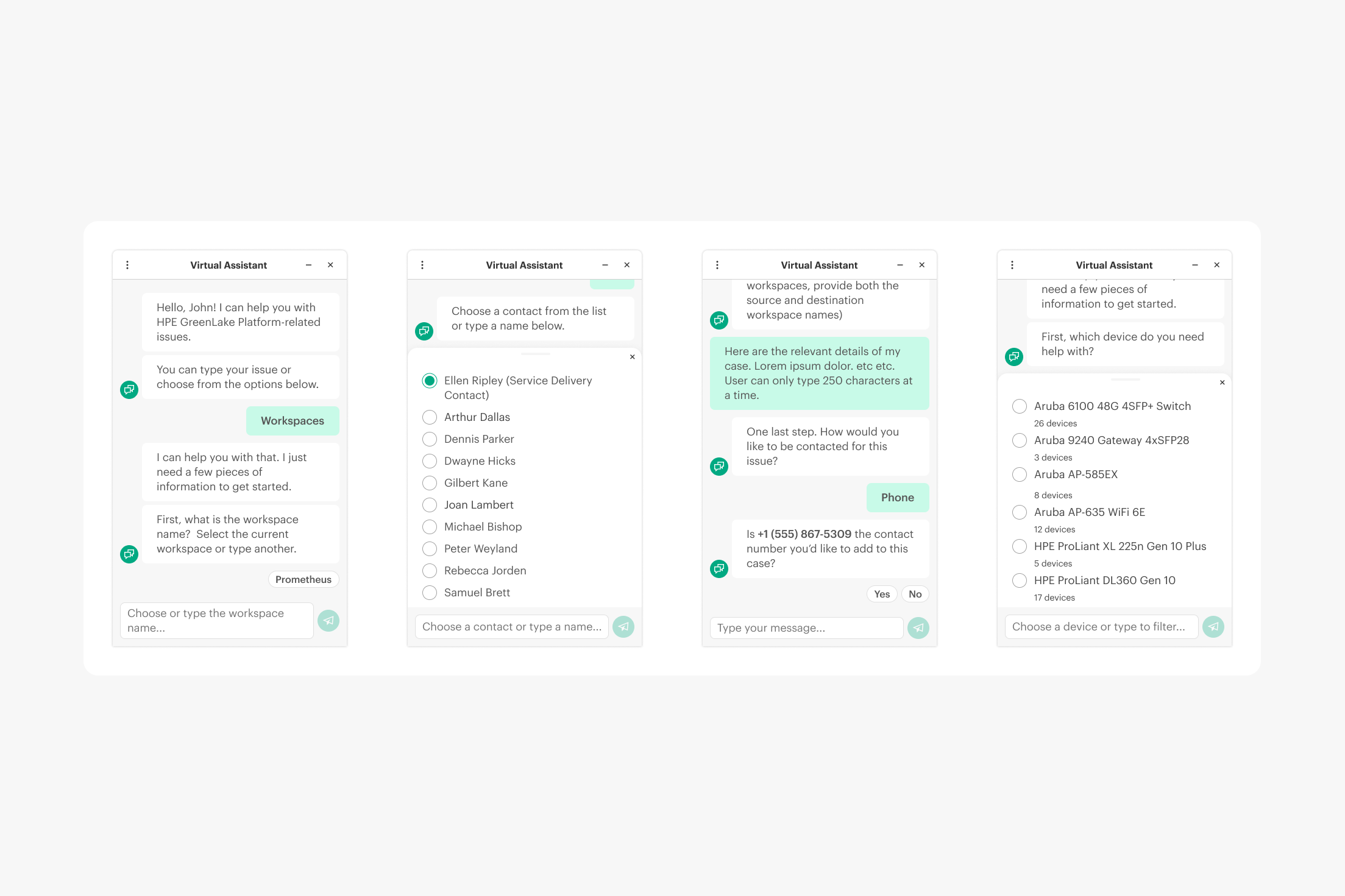

Proactive system integrations

A truly intelligent assistant doesn't ask for data it already possesses. I audited the proposed chat flows and identified multiple areas causing unnecessary user friction. By partnering with engineering to leverage Okta, workspace, and active-session data, I redesigned the initial AI prompts to automatically pre-populate known variables. Instead of forcing the user to manually type their active workspace, Service Delivery Contact, registered phone number, or assets, the Virtual Assistant seamlessly pulls this data from the backend, asking only for a simple confirmation. This drastically shortened the time-to-resolution and made the AI feel genuinely context-aware.
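The confirm-instead-of-ask pattern can be sketched in a few lines. This is an assumption-laden illustration, not the production logic: the `SessionContext` shape, the field names, and the prompt wording are hypothetical and do not reflect the Okta or GreenLake APIs.

```typescript
// Hypothetical shape of data already known from the signed-in session.
// Field names are illustrative, not taken from any real API.
interface SessionContext {
  workspace?: string;
  serviceDeliveryContact?: string;
  phoneNumber?: string;
}

type FieldName = keyof SessionContext;

type Prompt =
  | { kind: "confirm"; field: FieldName; value: string; message: string }
  | { kind: "ask"; field: FieldName; message: string };

const LABELS: Record<FieldName, string> = {
  workspace: "active workspace",
  serviceDeliveryContact: "Service Delivery Contact",
  phoneNumber: "registered phone number",
};

// For each required field, confirm the known value when one exists and
// fall back to an open question only when the session has nothing.
function buildPrompts(required: FieldName[], ctx: SessionContext): Prompt[] {
  return required.map((field): Prompt => {
    const value = ctx[field];
    if (value !== undefined && value.trim() !== "") {
      return {
        kind: "confirm",
        field,
        value,
        message: `Is ${value} still your ${LABELS[field]}?`,
      };
    }
    return { kind: "ask", field, message: `What is your ${LABELS[field]}?` };
  });
}
```

With a session that already knows the workspace but not the phone number, `buildPrompts(["workspace", "phoneNumber"], { workspace: "Acme Prod" })` yields one confirmation prompt followed by one open question.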

Motion design & conversational pacing

Amazon Lex imposed strict character limits per message, forcing us to fragment complex troubleshooting steps across multiple chat bubbles. To prevent overwhelming the user with an instantaneous wall of text, I engineered a strict motion choreography system. By introducing calculated millisecond delays linked to a dynamic typing indicator, I created a natural conversational cadence that guided the user's eye down the screen without losing context.
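To make the pacing idea concrete, the sketch below splits a long answer into bubbles on sentence boundaries and assigns each bubble a typing-indicator delay scaled to its length. The character cap and all timing constants are placeholder assumptions; the actual Lex limit and the delays used in the design are not stated here.

```typescript
// Placeholder values: the real per-message cap and timings are assumptions.
const MAX_CHARS = 120;
const MS_PER_CHAR = 20;
const MIN_DELAY_MS = 400;
const MAX_DELAY_MS = 2000;

interface PacedBubble {
  text: string;
  typingDelayMs: number; // how long the typing indicator shows before the bubble
}

// Group whole sentences into bubbles of at most maxChars characters.
// A single sentence longer than maxChars is kept whole in this sketch.
function splitIntoBubbles(answer: string, maxChars: number = MAX_CHARS): string[] {
  const sentences = answer.match(/[^.!?]+[.!?]*\s*/g) ?? [answer];
  const bubbles: string[] = [];
  let current = "";
  for (const raw of sentences) {
    const sentence = raw.trim();
    if (sentence.length === 0) continue;
    const candidate = current ? `${current} ${sentence}` : sentence;
    if (candidate.length <= maxChars) {
      current = candidate;
    } else {
      if (current) bubbles.push(current);
      current = sentence;
    }
  }
  if (current) bubbles.push(current);
  return bubbles;
}

// Longer bubbles get a longer typing delay, clamped so that short replies
// do not flash past and long ones do not stall the conversation.
function paceBubbles(bubbles: string[]): PacedBubble[] {
  return bubbles.map((text) => ({
    text,
    typingDelayMs: Math.min(
      MAX_DELAY_MS,
      Math.max(MIN_DELAY_MS, text.length * MS_PER_CHAR),
    ),
  }));
}
```

Clamping the delay is the key design choice in this sketch: the cadence tracks message length without ever feeling instantaneous or sluggish.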

Pioneering the next gen of support

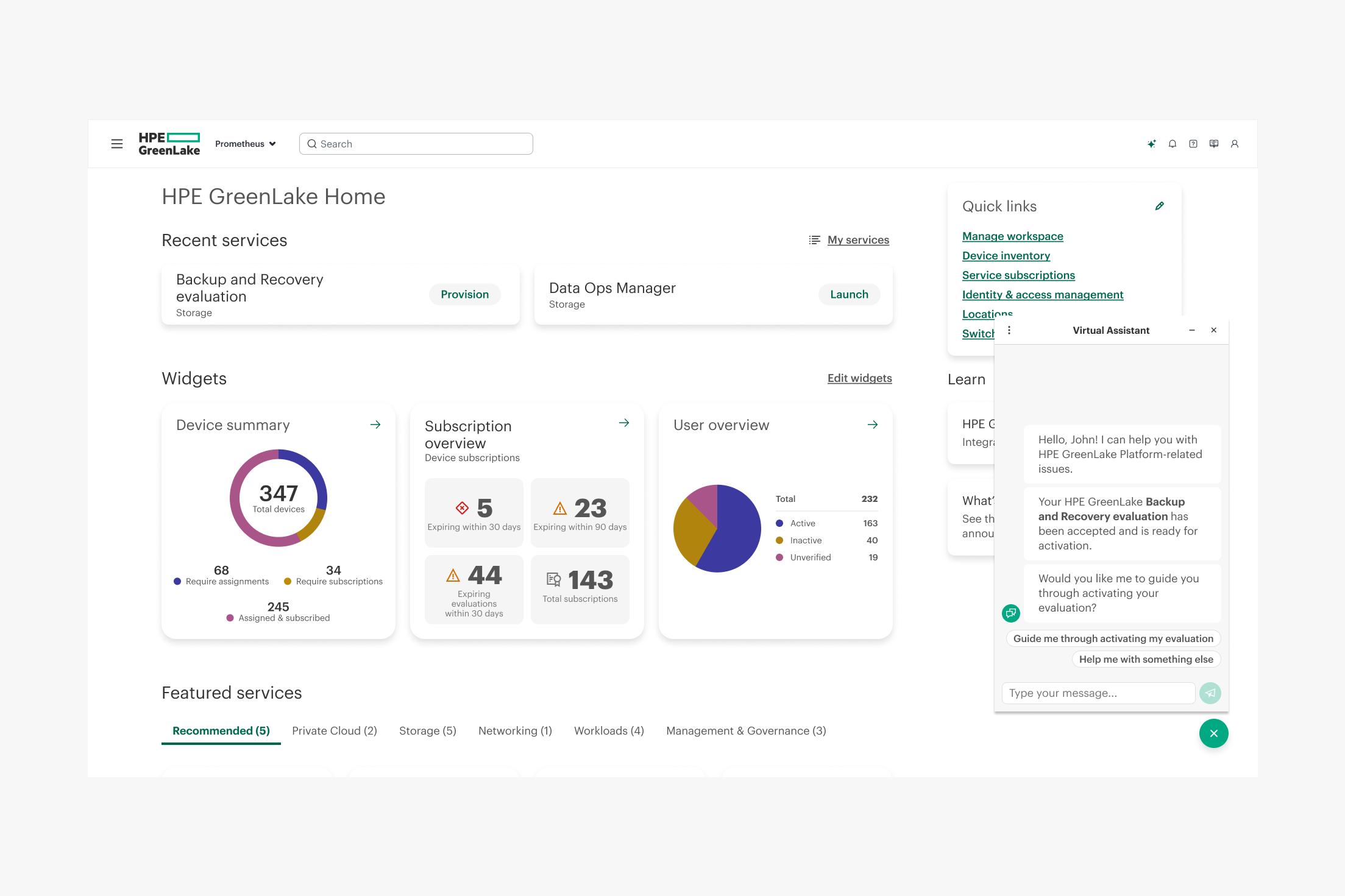

While delivering the MVP, I concurrently conceptualized advanced interaction models to continually mature the platform's AI capabilities and secure stakeholder buy-in for future fiscal years.

A core limitation of traditional enterprise support is the context-switching penalty—forcing users to abandon their active workspace to watch a tutorial video. To solve this, I designed a Picture-in-Picture (PiP) interaction model. When a user launches a video guide within the chat, it seamlessly "pops out" into a resizable, floating window. This enables users to keep the instructional content pinned to their screen while they simultaneously execute the complex steps directly within the platform, effectively transforming the AI from a static reference manual into a dynamic, side-by-side co-pilot.

The MVP established a reactive support model, but the strategic roadmap shifts the AI to a proactive stance. I conceptualized a predictive "listening" framework where the Virtual Assistant detects critical non-error states, such as an evaluation or trial license nearing its expiration date. Instead of waiting for a license to fail and forcing the user to open a reactive case, the VA proactively surfaces on the relevant dashboard screen to alert the user, offer direct help to convert or extend, and mitigate churn risk before the user even has to ask.
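One way to picture the expiration check is as a simple filter over the user's licenses. This is a conceptual sketch only: the `License` shape, the 14-day threshold, and the function names are hypothetical, since this concept was a roadmap item rather than shipped logic.

```typescript
// Hypothetical license record; field names are illustrative.
interface License {
  id: string;
  kind: "evaluation" | "trial" | "paid";
  expiresAt: Date;
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Whole days remaining, rounded up so a license expiring later today counts as 1.
function daysUntil(expiresAt: Date, now: Date): number {
  return Math.ceil((expiresAt.getTime() - now.getTime()) / DAY_MS);
}

// Return evaluation and trial licenses that have not yet expired but will
// within thresholdDays. These are the non-error states worth surfacing.
function licensesNeedingNudge(
  licenses: License[],
  now: Date,
  thresholdDays: number = 14, // assumed threshold, not from the case study
): License[] {
  return licenses.filter((license) => {
    if (license.kind === "paid") return false;
    const remaining = daysUntil(license.expiresAt, now);
    return remaining >= 0 && remaining <= thresholdDays;
  });
}
```

Already-expired licenses are deliberately excluded here, because at that point the user is in the reactive case flow that the proactive model is meant to prevent.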

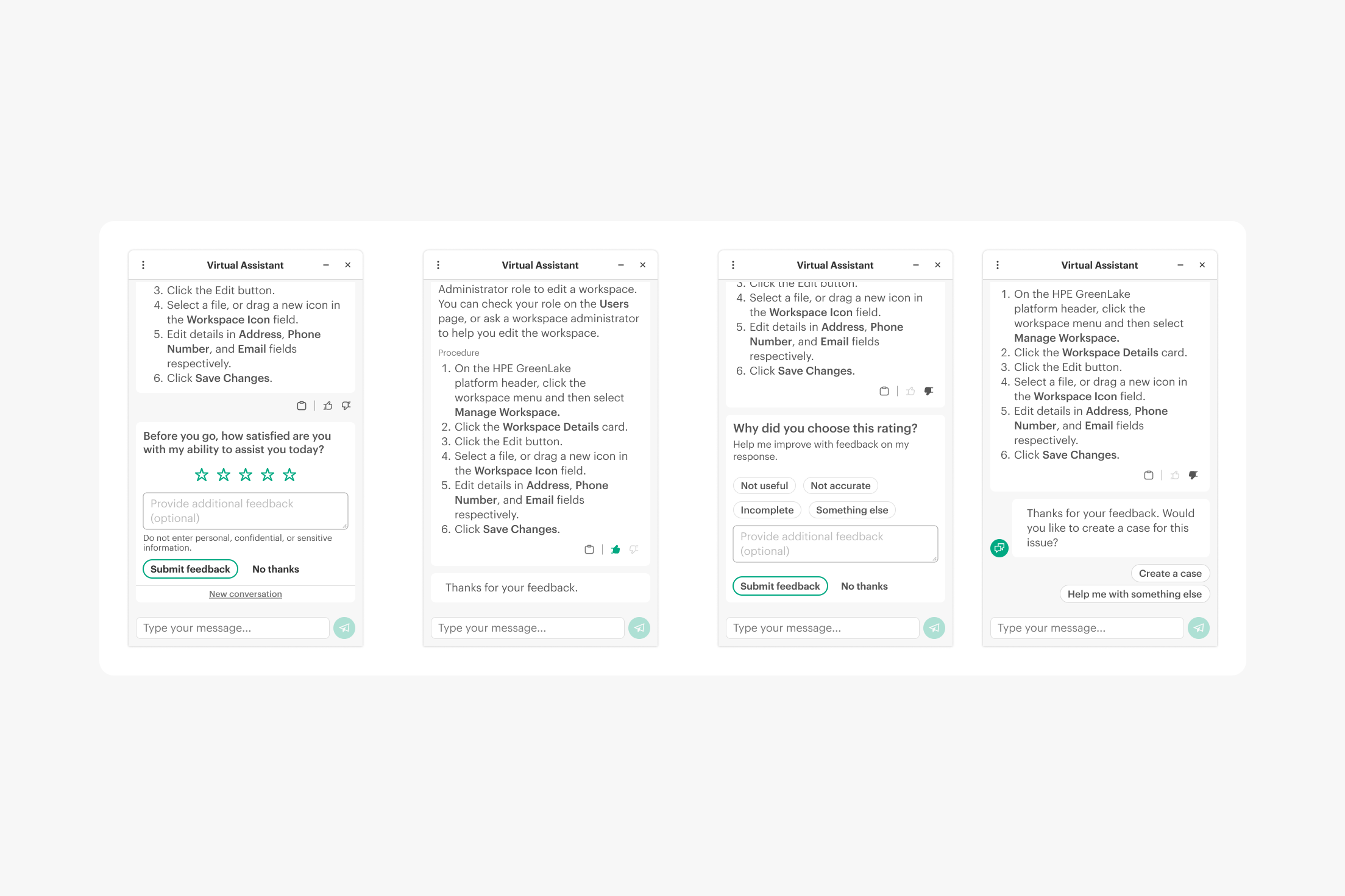

An enterprise AI model cannot mature without a rigorous, frictionless mechanism for measuring its own success. Rather than relying on easily ignored, post-interaction email surveys, I integrated a Qualtrics API micro-survey directly into the natural conclusion of the chat flow (after each FAQ response and when the user exits the chat). By embedding contextual thumbs up/down actions, star ratings, and low-friction qualitative feedback inputs directly within the conversational UI, I established an immediate Voice of the Customer (VoC) data pipeline. This ensured we were continuously capturing high-fidelity, in-the-moment user sentiment to actively train the NLP model and prove ongoing platform ROI.